The prospect of nuclear war continues to threaten the existence of humanity and the long-term viability of our societies. Today’s evolving security landscape demands novel approaches to both new and long-standing challenges.

The rapid adoption of immensely powerful technology-driven techniques and platforms by governments and militaries reveals the need not only for new discussion of nuclear risk reduction and emerging technologies, but also for an entirely new global technical and policy community that can devise the frameworks necessary to reduce catastrophic risks and prevent the outbreak of nuclear war.

Our initiatives focus on creating venues and work streams where current nuclear experts and decision-makers can step out of their existing domains and work side by side with deep technical experts from otherwise disparate practice areas. They develop novel approaches to nuclear crisis control, rethink nuclear deterrence, and assess the impacts of emerging and disruptive technologies on nuclear strategy and policy.

Current Projects

CATALINK

IST, together with global policy and technical experts and with the political and financial support of the Swiss and German governments, developed CATALINK, an internationally driven, simple, secure, and resilient communications capability. Building on the “hotline” model of previous generations, CATALINK relies on open-source technologies to maximize user integrity and trust.

AI and Nuclear Command, Control, and Communications

Today’s artificial intelligence (AI) is more advanced and powerful than ever, directly affecting strategic stability and the offense-defense balance. Nuclear-armed states are investing in advanced AI tools and capabilities and exploring ways to leverage AI for military advantage. The complexity of nuclear command, control, and communications (NC3) systems already creates significant challenges that directly affect nuclear deterrence. With the rapid growth in AI capabilities, global security rests in a delicate balance. IST’s work is urgently needed to raise awareness, foster dialogue, and establish frameworks for stable and predictable practices. In partnership with Longview Philanthropy, IST is pioneering action-oriented efforts to explore how advanced AI capabilities will be integrated into NC3 systems and subsystems.

Andrew Carnegie AI-Nuclear Policy Accelerator

As AI applications are increasingly integrated into nuclear weapons systems and related subsystems—including early warning, decision support, intelligence and predictive analysis, and other military platforms—nuclear policy professionals face a growing challenge: policy debates are accelerating faster than practitioners’ direct exposure to how modern AI tools and systems are actually built, tested, and deployed. Funded through a philanthropic grant from Carnegie Corporation of New York, the Andrew Carnegie AI–Nuclear Policy Accelerator is designed to close this gap by providing mid-career professionals in the national security space with hands-on technical literacy and applied engagement. The Accelerator will train and support nuclear policy practitioners drawn from government, international organizations, the military, think tanks, and academia.

Security Level 5 Task Force

A safe future runs through strong security and containment of frontier AI development. The SL5 Task Force is collaborating with over 50 participants, including AI lab decision-makers, national security leaders, data center operators, chip providers, security researchers, program managers, and engineers, to develop a technical roadmap and standard for achieving Security Level 5 (SL5) in artificial intelligence: cyber, physical, insider, and supply chain security capable of withstanding operations from the most capable nation-state actors. Led by Lisa Thiergart and Philip Reiner, the SL5 Task Force’s mission is to “create the optionality for U.S. AI Labs to reach security level 5 in the coming years, and to be able to activate SL5 within 3-6 months of choosing to do so.”

Past Projects

How will AI-related techniques have an impact on international security and stability, and what needs to be done to avoid unintended consequences?

[2017-2019]

Understanding and managing the long-term opportunities and risks posed by AI-related technologies for international security and warfare

[2017-2019]

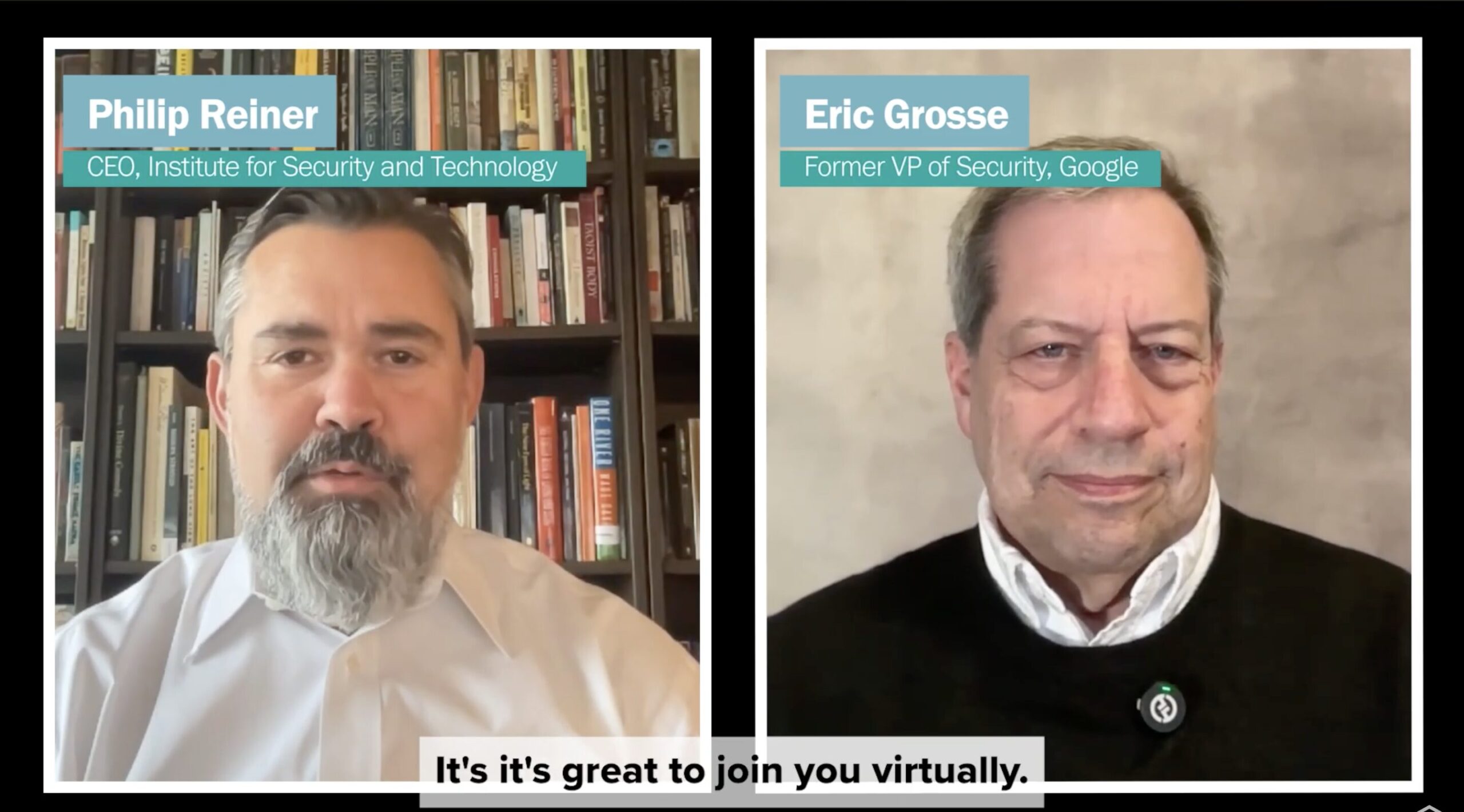

A podcast dedicated to unpacking NC3, one of the most complex systems in the world today, with Philip Reiner and Peter Hayes

[2019-2020]

If and when NC3 systems fail under stress, leaders must be able to communicate. Today’s NC3 systems rely on both legacy and modern technologies that are increasingly vulnerable to rapidly emerging, disruptive capabilities.

[2020-2022]

Outlining the global effects of nuclear modernization and advanced technologies

[2019-2022]

What are the risks of social media interacting with the early warning systems of nuclear-armed states? Could the resulting changes in the disposition of leaders actually, and likely inadvertently, lead to war?